The goal is to make it easier for viewers to distinguish between real and synthetic media as AI tools become more widely used across social platforms and digital creation tools.

Key Takeaways:

• California, home to many leading AI companies and a trendsetter in tech regulation, is stepping in with the California AI Transparency Act.

• This legislation is part of a larger 2025 wave of AI laws in California and positions the state as a leader in balancing innovation with consumer protection.

• The law primarily targets “covered providers”: developers of generative AI systems that are publicly accessible in California and have over 1 million monthly users.

• It focuses on AI-generated image, video, and audio content.

• Some experts argue it might stifle creative uses of AI in art, education, or entertainment, and that over-labeling could desensitize viewers.

California is advancing new legislation that pushes for clearer disclosure of AI-generated content, including requirements that AI-made videos be labeled or embedded with identifiable markers.

Why This Legislation Matters Now

As generative AI tools like video synthesizers, image editors, and audio generators proliferate, the line between authentic and synthetic media has blurred dramatically. Deepfakes, realistic but fabricated videos, have fueled misinformation, election interference concerns, non-consensual explicit content, and scams. Research shows many people struggle to spot AI-generated videos and often overestimate their own detection abilities.

California, home to many leading AI companies and a trendsetter in tech regulation, is stepping in with the California AI Transparency Act (originally enacted as SB 942 in 2024, then amended and expanded by AB 853, signed into law in October 2025). This law aims to restore trust in digital content by mandating technical and visible indicators of AI involvement.

Key Provisions of the California AI Transparency Act

The law primarily targets “covered providers”: developers of generative AI systems that are publicly accessible in California and have over 1 million monthly users. It focuses on image, video, and audio content (text obligations were narrowed in amendments).

- Manifest Disclosures (Visible Labels): Providers must offer users the option to add a clear, conspicuous label identifying content as AI-generated. This disclosure must be appropriate for the medium (e.g., on-screen text for videos), understandable to a reasonable person, and permanent or extremely difficult to remove.

- Latent Disclosures (Embedded Markers): AI-generated content must include hidden, machine-readable “provenance data” or watermarks. These embed details such as the provider’s name, the AI system’s name and version, the creation date/time, and a unique identifier. They must be detectable by the provider’s detection tool and hard to strip out.

- Detection Tools: Covered providers must make a free, public AI detection tool available. Users can upload content to check whether it was created or altered by that provider’s GenAI system and view any associated provenance data.

- Expanded Obligations (via AB 853):

  - Large online platforms (social media, search engines, messaging apps) must detect available provenance data in uploaded content, provide easy user interfaces to view it, and display authenticity information starting January 1, 2027.

  - Capture device manufacturers (e.g., smartphones, cameras) must eventually offer options to embed latent disclosures in human-captured content, helping distinguish authentic media. This requirement phases in by January 1, 2028.

The original effective date of January 1, 2026, was delayed to August 2, 2026, for core provider requirements to allow better alignment with international frameworks such as the EU AI Act.
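To make the latent disclosure and detection tool provisions more concrete, here is a minimal, hypothetical Python sketch. It is not the format the Act prescribes and not any provider’s real system. For simplicity it keeps the provenance record as a separate JSON-style dictionary instead of embedding it in the media file, and it signs the record with an HMAC using a made-up key (`PROVIDER_KEY`) so a detection function can tell a forged record or altered content from a genuine one. The names `make_latent_disclosure` and `detect` are illustrative only.

```python
import hashlib
import hmac
import json
import uuid
from datetime import datetime, timezone
from typing import Optional

# Hypothetical secret held only by the provider. A production system would use
# managed keys or public-key signatures, not a hard-coded value.
PROVIDER_KEY = b"example-provider-secret"


def _sign(fields: dict) -> str:
    """HMAC-SHA256 over a canonical JSON encoding of the record fields."""
    payload = json.dumps(fields, sort_keys=True).encode("utf-8")
    return hmac.new(PROVIDER_KEY, payload, hashlib.sha256).hexdigest()


def make_latent_disclosure(content: bytes, provider: str, system: str, version: str) -> dict:
    """Build a provenance record with the kinds of fields the Act describes."""
    fields = {
        "provider": provider,
        "system": system,
        "version": version,
        "created_at": datetime.now(timezone.utc).isoformat(),
        "id": str(uuid.uuid4()),  # unique identifier for this piece of content
        # Bind the record to these exact bytes so it cannot be reused on other media.
        "content_sha256": hashlib.sha256(content).hexdigest(),
    }
    return {**fields, "signature": _sign(fields)}


def detect(content: bytes, record: Optional[dict]) -> dict:
    """Toy detection tool: report provenance only if the record checks out."""
    if record is None:
        return {"ai_generated": None, "reason": "no provenance data found"}
    fields = {k: v for k, v in record.items() if k != "signature"}
    if not hmac.compare_digest(_sign(fields), record.get("signature", "")):
        return {"ai_generated": None, "reason": "provenance record fails verification"}
    if fields.get("content_sha256") != hashlib.sha256(content).hexdigest():
        return {"ai_generated": None, "reason": "content altered after disclosure"}
    return {"ai_generated": True, "provenance": fields}


if __name__ == "__main__":
    video = b"synthetic video bytes"
    rec = make_latent_disclosure(video, "ExampleAI", "ExampleVideoGen", "2.1")
    print(detect(video, rec)["ai_generated"])   # True
    print(detect(video + b"x", rec)["reason"])  # content altered after disclosure
```

Because this toy record is bound to a byte-level hash, any re-encode, crop, or compression breaks the match. That brittleness is one reason real provenance systems pair cryptographically signed metadata with watermarks or fingerprints intended to survive common edits.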

How It Works in Practice

Imagine creating a video with a popular AI tool:

- The tool prompts or allows you to add a visible watermark or label saying “AI-Generated” with details.

- Behind the scenes, invisible metadata is embedded.

- When uploaded to a large online platform covered by the law, the platform can read and display this info to viewers.

- Anyone suspicious can use the provider’s detection tool to verify origins.

This “manifest + latent” approach combines human-readable warnings with technical traceability, making tampering harder while empowering platforms and users.
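The layered “manifest + latent” idea can be illustrated with a toy model. In this hypothetical Python sketch, all names (`MediaItem`, `crop_out_label`, `strip_metadata`, `platform_view`) are illustrative and do not come from the law or from any platform. Each edit removes one disclosure layer, and `platform_view` shows what a viewer would still learn. For simplicity it assumes that stripping metadata removes all latent data, whereas a robust watermark may survive such edits.

```python
from dataclasses import dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class MediaItem:
    visible_label: Optional[str]  # manifest disclosure, e.g. on-screen "AI-Generated" text
    provenance: Optional[dict]    # latent disclosure: hidden, machine-readable data


def generate(system: str) -> MediaItem:
    """Hypothetical AI tool that applies both disclosure layers."""
    return MediaItem(visible_label="AI-Generated",
                     provenance={"system": system, "id": "example-0001"})


def crop_out_label(item: MediaItem) -> MediaItem:
    """An edit that removes the visible label but leaves hidden data intact."""
    return replace(item, visible_label=None)


def strip_metadata(item: MediaItem) -> MediaItem:
    """An edit (e.g. a screenshot) that discards hidden data but keeps the label."""
    return replace(item, provenance=None)


def platform_view(item: MediaItem) -> str:
    """What a platform could show viewers, preferring machine-readable provenance."""
    if item.provenance is not None:
        return f"Provenance: created with {item.provenance['system']}"
    if item.visible_label is not None:
        return f"Label shown: {item.visible_label} (no provenance data)"
    # Absence of disclosures does not prove the content is human-made.
    return "No disclosure found (not proof the content is authentic)"


if __name__ == "__main__":
    original = generate("ExampleVideoGen")
    print(platform_view(crop_out_label(original)))
    print(platform_view(strip_metadata(original)))
    print(platform_view(strip_metadata(crop_out_label(original))))
```

In this model, removing either layer alone still leaves the viewer with some signal; only removing both erases it, which is the sense in which combining the two makes tampering harder. The last case also shows why a missing label should not be read as a guarantee of authenticity.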

Benefits and Potential Impact

- Combating Misinformation: Clear labels could reduce the spread of deceptive political deepfakes or scam videos.

- Protecting Individuals: Helps victims of non-consensual deepfake pornography or defamation identify synthetic content.

- Building Trust: As AI creation tools become mainstream on social media and other platforms, viewers gain confidence in what they’re watching.

- Innovation Incentive: Encourages responsible AI development with built-in transparency features, potentially influencing global standards since many tools are used worldwide.

Broader Influence on the Content Economy

- Trust and Misinformation: Clear disclosures combat election deepfakes, scams, and non-consensual content, potentially restoring faith in digital media. However, over-labeling or imperfect detection could erode trust in all content or spark “authenticity wars.”

- Economic Reallocation:

  - Winners: Detection/verification startups, human-centric creators, platforms with strong provenance tech, and industries like education or legal services needing verifiable content.

  - Losers: Pure AI content farms, unlabeled deepfake producers, and legacy players slow to adapt. Job shifts toward AI oversight, labeling, and ethical curation roles.

  - Market size effects: The global creator economy might see slower growth in unchecked AI content but higher-value segments for transparent, high-quality production.

- Innovation Incentives: Developers must build better watermarking, provenance standards (e.g., C2PA-like frameworks), and detection. This could spur open standards, reducing fragmentation but raising barriers for smaller AI firms.

- Regulatory Precedent and Fragmentation Risks: California’s move pressures other states and the federal government. Similar bills (e.g., on political ads or deepfakes) are proliferating. Without national harmonization, creators face a patchwork of rules, such as California’s labeling requirements alongside different thresholds elsewhere, which complicates nationwide or global operations.

- Intellectual Property and Rights: By making AI content traceable, the law indirectly aids copyright enforcement and right-of-publicity claims against unauthorized deepfakes or training data misuse, though it doesn’t directly resolve training data issues.

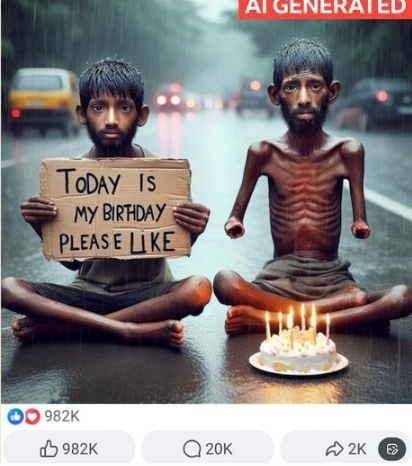

AI-generated photo sample

Overall, the law could accelerate segmentation of the content market into premium authentic content and commoditized, labeled AI output. Creators skilled in disclosure-friendly hybrids or verification may thrive, while those relying on undetectable AI for scale could face headwinds.

Challenges and Unintended Consequences

- Technical hurdles: Watermarks can be stripped or defeated; detection tools aren’t foolproof, especially with adversarial AI.

- Free speech and creativity: Overly broad labeling might chill artistic expression or satirical content.

- Enforcement: Penalties (civil penalties of up to $5,000 per violation) and platform liability could lead to over-cautious moderation.

- Global vs. local: Non-U.S. companies may limit California access or apply rules unevenly.

California’s approach complements other efforts, such as rules for election-related deepfakes and companion chatbots that must disclose they are AI.

Challenges and Criticisms

- Technical Feasibility: Ensuring watermarks are truly permanent and detectable across editing tools remains complex. Adversarial attacks could try to remove them.

- Compliance Burden: Smaller developers or global platforms may face costs, though the law targets high-usage systems. Covered providers must also contractually require licensees of their systems to keep the disclosure capabilities intact.

- Enforcement: Violations can result in civil penalties up to $5,000 per violation, enforced by the Attorney General or local officials, plus attorney fees.

- Global Reach: Since many AI tools are accessed in California, the law could have extraterritorial effects, prompting similar rules elsewhere but raising questions about consistency.

Ripple Effects on Platforms and Distribution

Major platforms (Meta, Google, X, TikTok, etc.) operating in or serving California users will bear significant costs:

- They must integrate detection systems, retain metadata, and provide user-facing authenticity interfaces.

- This may lead to algorithmic changes: prioritizing labeled authentic content, demoting unlabeled synthetic material, or adding warnings that reduce engagement for AI-heavy posts.

- Monetization shifts: Ad revenue tied to viral deepfakes or misleading content could decline. Conversely, trusted platforms investing in robust verification tools might attract more premium advertisers and users.

California’s AI Leadership

This legislation is part of a larger 2025 wave of AI laws in California, including training data transparency requirements and frontier AI risk disclosures. It positions the state as a leader in balancing innovation with consumer protection, much like its pioneering privacy laws (e.g., CCPA).

Some argue it might stifle creative uses of AI in art, education, or entertainment, or that over-labeling could desensitize viewers. Others worry about free speech implications, though the law focuses on disclosure rather than bans.

As AI-generated videos flood social platforms, expect similar pushes at the federal level and in other states. For now, California is setting a precedent: transparency isn’t optional—it’s becoming embedded in the technology itself.

What Comes Next?

Covered companies are already preparing systems for August 2026 compliance. Content creators should familiarize themselves with labeling options in their tools. Viewers can look forward to more informed consumption of media.

In an era where “seeing is believing” no longer holds, California’s push for clearer AI disclosures represents a practical step toward digital literacy and accountability. It won’t solve every deepfake problem overnight, but it makes synthetic content harder to hide and real content easier to trust.

Could We See A More Transparent Content Future?

California’s AI disclosure framework won’t eliminate AI-generated content; it will make it identifiable. This transparency could professionalize the content economy, rewarding authenticity while allowing AI to augment (rather than fully replace) human creativity.

In the long run, expect:

- Standardized provenance protocols adopted industry-wide.

- New business models around “verified human” or “AI-transparent” content.

- Greater public literacy about synthetic media.

As enforcement ramps up post-2026, the content economy will adapt, much like it did to social media algorithms or GDPR. The result may be a healthier digital ecosystem where audiences can better distinguish signal from synthetic noise, ultimately benefiting creators who prioritize trust over deception.

For businesses and creators in the space, proactive steps such as auditing AI tool usage, integrating labeling workflows, and monitoring platform policies will be essential. California’s experiment could define the rules of engagement for the AI-augmented internet.

What do you think? Will visible AI labels become as common as “Sponsored” tags on social media? Share your thoughts in the comments section below 👇
