In a major leap forward for neurotechnology, researchers at the University of California, Davis have created a brain-computer interface (BCI) that translates brain signals directly into spoken words in near real time.

Key Takeaways:

• New neurotechnology AI chip helps a speech-impaired man speak again

• Doctors implanted the medical AI chip into his brain, helping the amyotrophic lateral sclerosis (ALS) patient speak again

• Medical experts call this technology a “Wonderful art of God”

Researchers created a synthetic voice that closely mimicked the participant’s own speech and delivered his intended words just 10 milliseconds after detecting the brain signals indicating his desire to speak.

Detailed in the journal Nature, the system represents a dramatic advance over previous brain-computer interfaces (BCIs), which took three seconds to begin streaming speech or only generated output once the user had finished silently miming a full sentence.

For people who have lost the ability to speak due to conditions like amyotrophic lateral sclerosis (ALS), this could mean the return of natural, fluid conversation.

The team made the voice sound natural and personal by training artificial intelligence on recordings of interviews the man had given before his condition took hold.

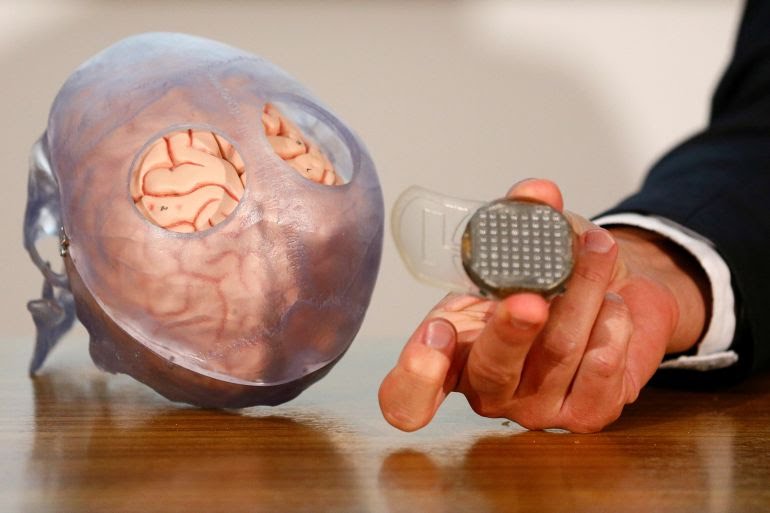

The system was tested on a man with ALS who could no longer speak. Surgeons implanted 256 electrodes into the specific brain regions responsible for speech production. These electrodes captured the neural activity associated with his intended words, expressions, and even vocal nuances like pitch and tone.

Using an auditory feedback system, the patient could consciously raise the tone’s pitch to signal “yes” or lower it to signal “no.” Exactly how the brain accomplishes this precise modulation remains a mystery, according to researcher Birbaumer.

The implanted electrodes recorded neuronal firing patterns, which were amplified by an external device attached to the skull and then processed by custom software that translates the signals into usable output.

“This is the holy grail in speech BCIs,” said Christian Herff, a computational neuroscientist at Maastricht University in the Netherlands, who was not involved in the work. “This is now real, spontaneous, continuous speech.”

What makes this breakthrough truly remarkable is its speed. Previous BCI speech systems required several seconds to decode thoughts and generate audio. The UC Davis technology produces voice output in just 25 milliseconds, fast enough to support real-time back-and-forth dialogue, interruptions, tone adjustments, and natural conversational flow.

How the System Was Trained

Brain-computer interface technology for helping ALS patients communicate has become more common in recent years. BCIs work by capturing brain signals, sending them to a computer, and converting them into commands. While the approach has worked for patients who still retain some eye movement, it had not performed nearly as well in people with CLIS and total-body paralysis—until now.

In the new study, surgeons implanted two tiny microchips, each about 1.5 mm across, into the patient’s motor cortex, the brain area that controls movement. The electrodes inside the brain detected changes in neural activity: an increase triggered a rising audio tone from a connected computer, while a decrease produced a falling tone. Within two days, the man learned to control the pitch of the tone at will.

Researchers implanted two microchips in the man’s brain [File: Emmanuel Foudrot/Reuters]
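The feedback loop described above, where rising neural activity raises a tone and falling activity lowers it, can be illustrated with a short Python sketch. This is not the researchers' software. The function names, the baseline rate, the gain, the tone range, and the decision margin are all hypothetical values chosen only to show the idea.

```python
# Illustrative sketch only: every name and number here is an assumption,
# not a detail taken from the study.

def firing_rate_to_tone(rate_hz, baseline_hz, base_tone_hz=440.0,
                        gain_hz_per_spike=20.0, lo_hz=120.0, hi_hz=700.0):
    """Map a neural firing rate to a feedback tone frequency.

    Activity above the baseline raises the tone; activity below lowers it.
    The result is clamped to a comfortable, audible range.
    """
    tone = base_tone_hz + gain_hz_per_spike * (rate_hz - baseline_hz)
    return max(lo_hz, min(hi_hz, tone))


def classify_answer(tones_hz, base_tone_hz=440.0, margin_hz=50.0):
    """Turn a short run of feedback tones into "yes", "no", or "undecided".

    A mean tone clearly above the resting tone counts as "yes";
    clearly below counts as "no"; anything in between is left undecided.
    """
    mean_tone = sum(tones_hz) / len(tones_hz)
    if mean_tone > base_tone_hz + margin_hz:
        return "yes"
    if mean_tone < base_tone_hz - margin_hz:
        return "no"
    return "undecided"


if __name__ == "__main__":
    baseline = 20.0  # hypothetical resting firing rate, spikes per second
    raised = [firing_rate_to_tone(r, baseline) for r in (28.0, 30.0, 29.0)]
    lowered = [firing_rate_to_tone(r, baseline) for r in (12.0, 10.0, 11.0)]
    print(classify_answer(raised))   # patient pushes the tone up
    print(classify_answer(lowered))  # patient pushes the tone down
```

The "undecided" band is a design choice in this sketch: when the tone stays near its resting pitch, the program declines to guess instead of forcing a yes or no.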

To teach the AI how to interpret his brain signals, the participant was shown thousands of sentences on a screen and asked to attempt speaking them silently (using only his brain’s motor commands). While he did this, the implanted electrodes recorded his neural patterns in detail.

To demonstrate flexibility, the participant was asked to produce simple sounds such as “aah,” “ooh,” and “hmm,” as well as entirely made-up words. The BCI reproduced them all accurately, proving it could generate speech without being limited to a fixed vocabulary.

An advanced AI model then learned to map specific brain activity to:

- Individual words

- Sentence structure

- Pitch

- Intonation

- Emotional expression

Researchers also used earlier audio recordings of the man’s voice (made before his condition progressed) to synthesize a personalized digital voice that closely resembles his original speech. The result isn’t robotic or generic — it sounds like him.

Impressive Real-World Testing

In clinical tests, the participant was able to:

- Form complete, fluent sentences

- Produce natural filler sounds like “hmm” or “eww”

- Change pitch and attempt simple singing

The system did not just decode words; it captured how he wanted to say them, restoring a deeply personal aspect of communication that many previous technologies could not achieve.

A Promising Future for Communication Restoration

When ALS patients lose the ability to speak, they typically rely on eye-tracking devices to select letters on a screen. In later stages, they can answer yes-or-no questions using only tiny eye movements. Before the disease progresses further, family members would hold up a letter grid against a four-color background, pointing to each section while interpreting any eye movement as a “yes.”

While this technology is still experimental and limited to a single participant so far, the results represent a significant step toward restoring real-time, natural speech for millions of people living with paralysis, ALS, stroke, or other speech-impairing conditions.

This UC Davis BCI demonstrates that thought-to-speech translation can be fast, expressive, and personalized — moving us closer to a world where losing the ability to speak no longer means losing your voice.

A separate study, published in Nature Communications, was the first to enable a person with complete locked-in syndrome (CLIS), someone who is fully conscious and mentally sharp but completely paralyzed, to communicate in full sentences.

Disclaimer!

This publication is made for educational and awareness purposes. It is not made for the sale of any product or service. The information provided here is based on verified, human-aided research and studies.
