Over 200 child advocacy groups and experts, led by Fairplay, have sent an open letter to YouTube CEO Neal Mohan and Google CEO Sundar Pichai demanding a complete ban on AI-generated “slop” videos from YouTube Kids.

Key Takeaways:

• Over 200 advocacy groups are demanding a complete ban on AI-generated “slop” videos from YouTube Kids.

• Experts say AI-generated videos erode trust, disrupt the creator economy, spread misinformation, and harm children’s development.

• YouTube and Meta have begun cracking down on AI slop videos, but more remains to be done.

The letter, signed by organizations including the American Federation of Teachers and by researchers including Jonathan Haidt, argues that these cheap, algorithmically produced videos hijack children’s attention and distort their understanding of reality.

Recall that last month, juries in California and New Mexico delivered significant blows to social media giants Meta and Google (Alphabet), holding the companies liable for harms linked to their platforms’ design and practices affecting young users.

In one of those cases, after more than 40 hours of deliberations, the jury awarded $3 million in compensatory damages (with Meta responsible for 70% and Google/YouTube for 30%) plus $3 million in punitive damages (Meta: $2.1 million; Google: $900,000), for a total of $6 million. The verdict treats social media apps as potentially “defective products,” akin to how tobacco or gambling products have been scrutinized.

Research indicates that the top AI slop channels targeting kids reportedly earn over $4.25 million annually.

What is AI Slop?

AI “slop” refers to low-quality, mass-produced content, often videos, generated quickly and carelessly with generative AI tools.

It prioritizes volume and algorithmic engagement (views, watch time, ads) over originality, accuracy, or value.

Think of AI slop as repetitive, bizarre, or surreal clips, such as endless cartoon animals in odd scenarios, AI-narrated “facts,” plotless kids’ animations, or clickbait “documentaries.” The term highlights the flood of soulless, low-effort output that’s clogging platforms.

What is AI slop and why it matters for video creators – Artlist Blog

This issue has exploded globally since around 2024–2025, driven by accessible tools for text-to-video, voice synthesis, and editing. Platforms like YouTube, TikTok, Facebook, and Instagram amplify it because algorithms reward consistent, high-engagement uploads even if the content feels empty or weird.

Scale of the Problem

Research from video company Kapwing (late 2025) found that 20–33% of videos shown to new YouTube users qualify as low-quality AI content or “brainrot” (mind-numbing, addictive filler). About 21% of the first 500 Shorts shown to a fresh account were pure AI slop. Nearly 10% of the platform’s fastest-growing channels were AI-only, racking up tens of billions of views and generating an estimated $117 million in annual ad revenue across hundreds of such channels.

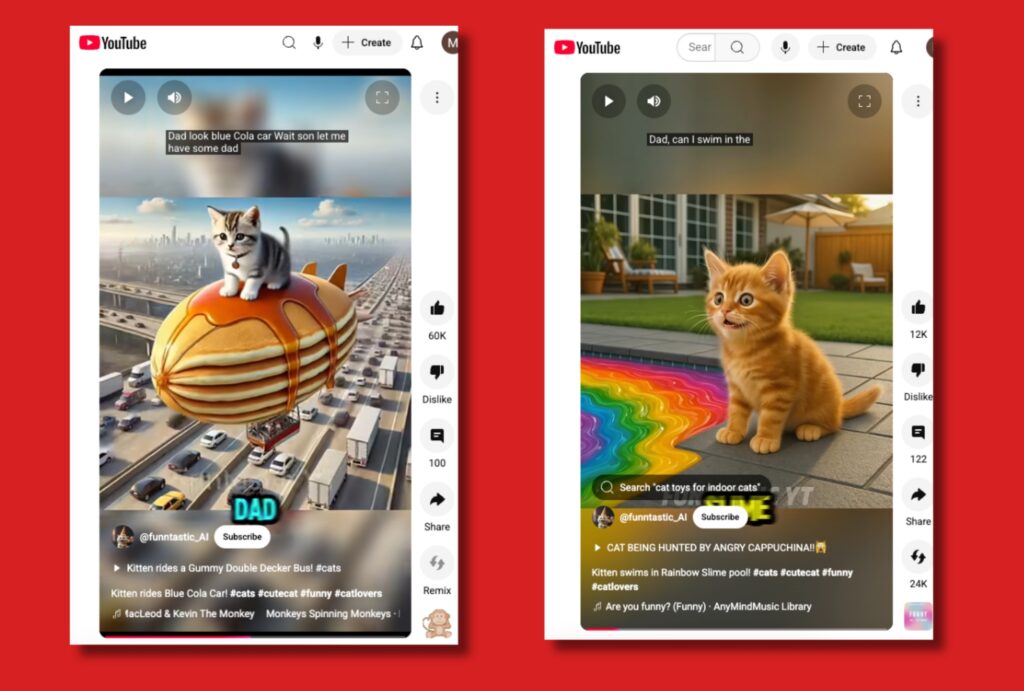

Representative “AI Slop” Videos – YouTube

YouTube’s CEO acknowledged the “low-quality, repetitive content” issue in early 2026, and the platform has removed billions of such videos while testing viewer feedback prompts like “Does this feel like AI slop?”

Key Global Issues

Here are the main problems with AI slop videos, backed by reports and expert concerns:

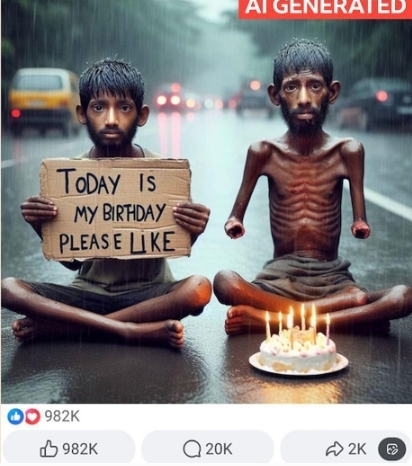

1. Erosion of Trust and “Reality Apathy”: When feeds overflow with unconvincing or surreal videos, people start questioning everything online. This “flood the zone” effect exhausts critical thinking, meaning users may dismiss real events as fake or accept misleading ones as true. Deepfakes (more polished synthetic media) blur further into slop territory, supercharging misinformation. Examples include AI videos of fake disasters, political events, investment schemes, or conspiracies that complicate real emergencies (e.g., flooding reports muddied by fabricated clips). Deepfake incidents have surged dramatically, with fraud losses in the hundreds of millions.

The “AI Slop” Video Boom: Why the Internet Can’t Look Away | by RoyC Frontiers | Medium

2. Impact on Children and Development: AI slop heavily targets kids’ content, with channels pumping out 50+ videos daily of repetitive songs, toys, or surreal animations (far outpacing legacy creators like Sesame Street). Over 200 experts, child advocates (including Jonathan Haidt), and groups petitioned YouTube in 2026 to ban or heavily restrict it on YouTube Kids, arguing that it distorts reality, overwhelms young brains, promotes “brain rot,” and hinders learning. Some channels earn millions annually from this. Parents and psychologists call it a “monster problem” with few guardrails.

3. Disruption of the Creator Economy and Quality Content: Human creators report declining views as algorithms push cheap, high-volume AI output. Genuine channels in niches like history, science, or essays get buried. Slop farms (operations cranking out content for ad revenue) can net creators thousands monthly, but they degrade the platform’s overall value. Advertisers face brand-safety risks when ads appear next to bizarre or low-trust videos, and surveys show consumer trust drops sharply for perceived AI content.

4. Misinformation, Scams, and Societal Harm: AI slop enables cheap, scalable falsehoods, such as fake news clips, sensational “true crime,” or politically charged videos (e.g., violent or misleading immigration/deportation animations). It supercharges spam and scams, including deepfake-style fraud. In extreme cases, channels post shocking content for virality. Broader effects include reduced trust in media and potential mental health strain from constant distortion of reality.

AI ‘slop’ is transforming social media – and there’s a backlash – BBC News

The coalition wants mandatory AI labeling, algorithmic restrictions for under-18 users, and an end to YouTube’s investment in AI children’s content.

This is in line with California’s intervention through the California AI Transparency Act (originally SB 942, amended and expanded by AB 853, signed into law in October 2025). The law aims to restore trust in digital content by mandating technical and visible indicators of AI involvement in AI-generated video, audio, and images.

The 200 child advocacy groups and experts, led by Fairplay, demand a complete ban on AI-generated “slop” videos from YouTube Kids.

However, critics argue that social media provides connection, creativity, and information access, and that individual responsibility (such as parental oversight) plays a role.

Defenders of the companies point to existing tools like time limits and family pairing. Yet the juries’ findings, based on evidence of known harms, point to design decisions that exploit vulnerable young brains.

Looking to the Future

For parents, educators, and policymakers, the message is clear: awareness of the risks of AI-generated content is growing, and platforms may soon operate under greater scrutiny.

The full impact will unfold through appeals, additional trials, and potential legislation. One thing is certain: the era of unchecked “move fast and break things” when it comes to young users’ well-being appears to be ending.

Platforms Have Started Reacting:

- YouTube: Removed billions of low-quality videos, improved detection, added viewer flagging, and is working to reduce repetitive content. Critics say it’s not enough, as financial incentives remain.

- Others (Meta, Pinterest, etc.): Some labeling or opt-outs, but algorithms often still boost slop for engagement.

- Public backlash is visible: Comment sections call out AI content, accounts track “AI slop” examples, and the term “slop” has become culturally prominent (it was even named Merriam-Webster’s 2025 Word of the Year). Users report “AI fatigue” and spending less time online.

Challenges persist because detection is imperfect (AI improves fast), enforcement lags volume, and ad-driven business models reward quantity.

Broader Implications

AI slop videos highlight tensions in the generative AI era: incredible creative potential versus incentives for junk. They risk turning the internet into a “wasteland” of meaningless filler, crowding out authentic voices, harming vulnerable groups (especially kids), and weakening shared reality. Solutions under discussion include stronger labeling and disclosure rules, algorithm changes that prioritize quality and human signals, ad revenue penalties for low-effort content, and better tools for users to filter it.

On the positive side, awareness is rising — people spot AI slop more easily (weird artifacts, repetitive patterns, unnatural motion/voices), and high-quality human content can stand out more as audiences seek authenticity.
