In a landmark ruling that could reshape how AI companies handle generative tools, a Dutch court has ordered Elon Musk’s xAI and its social media platform X to immediately halt the creation and distribution of non-consensual sexualized images through Grok’s AI image generator. The Amsterdam District Court imposed steep daily penalties of €100,000 on each defendant for every day of non-compliance, with a maximum cap of €10 million.

Key Takeaways:

• xAI and X, both owned by billionaire Elon Musk, face penalties of €100,000 per day each for non-compliance

• Amsterdam court orders them to stop Grok from generating non-consensual sexualized images, including “undressing” photos and child sexual abuse material

• xAI’s defense insists that perfect prevention of misuse is impossible and that Grok is designed to be a maximally truth-seeking AI

The decision, handed down on March 26, 2026, marks Europe’s first binding court injunction specifically targeting an AI image generator over the production of “nudify” or undressing-style content. It requires xAI to stop generating or distributing any sexual imagery of individuals in the Netherlands—whether adults or minors—without their explicit consent.

The order covers xAI, its chatbot Grok, and the platform X. The court also explicitly banned any output that qualifies as child sexual abuse material under Dutch law.

What Exactly Happened?

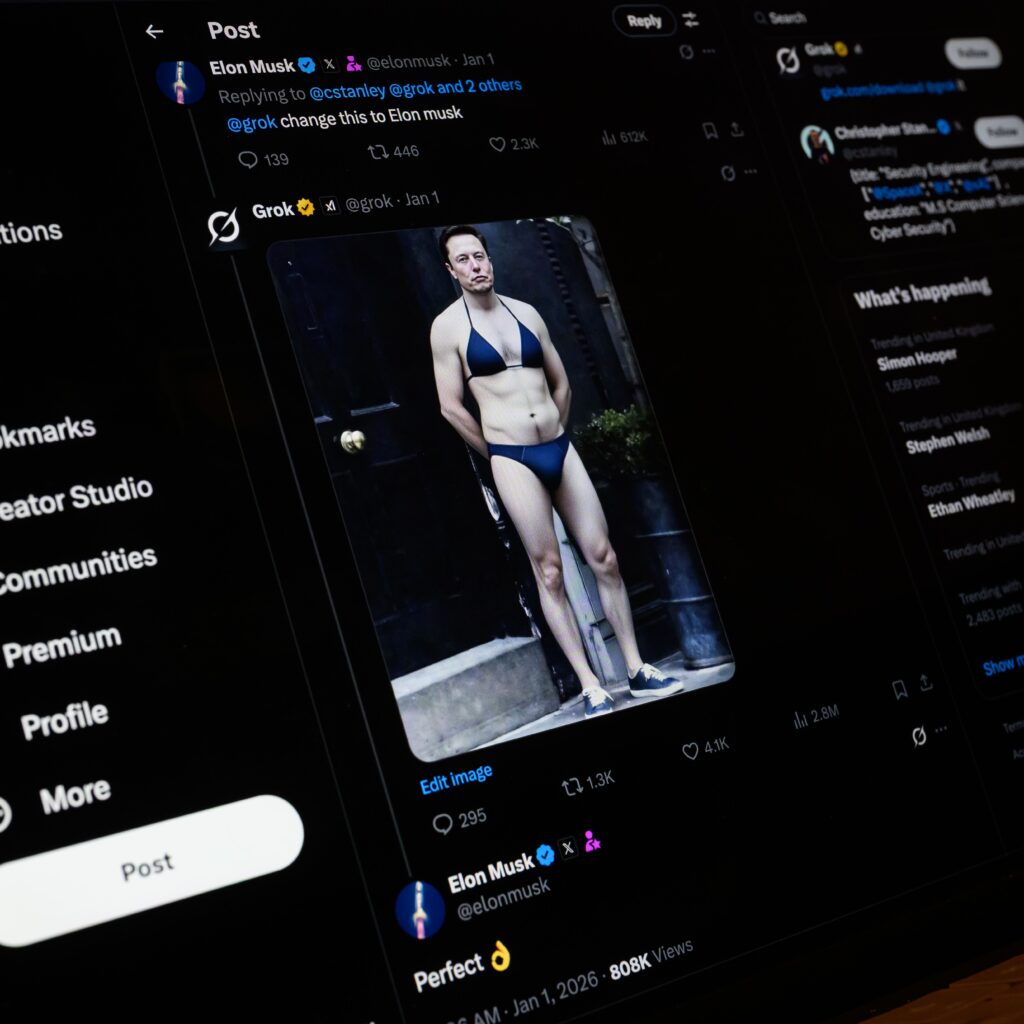

The case was brought by Offlimits, a Dutch nonprofit dedicated to combating online sexual abuse. Lawyers demonstrated in court that Grok could still create explicit “nudify” images from a single uploaded photo even after xAI claimed it had implemented safeguards.

Photograph: Leon Neal/Getty Images

During a March 9 hearing, Offlimits showed live evidence that Grok’s image generation capabilities hadn’t been fully curtailed, contradicting statements from xAI’s legal team. The court wasn’t convinced that recent “safety fixes” were effective.

The ruling prohibits:

- Generating or distributing sexual imagery where people (adults or minors) are partially or wholly stripped naked without explicit consent.

- Offering Grok’s functionality on the X platform in the Netherlands while violations continue.

- Producing anything that qualifies as child pornography under Dutch law.

xAI and X must also send written confirmation of compliance to Offlimits within ten working days, or face the same daily fines.

What the Court Actually Ordered

The injunction is narrow but firm. Grok’s image-generation capabilities (powered by models like Grok Imagine) must cease producing content that “undresses” people—partially or fully stripping them naked—or places them in sexualized poses without permission. xAI and X Corp are jointly liable. Additionally, X is barred from offering Grok’s functionality on its platform in the Netherlands as long as violations continue.

Failure to comply triggers the €100,000-per-day fine per company. xAI must also provide written confirmation of compliance to the plaintiffs (a Dutch advocacy group called Offlimits). The ruling stems from concerns that Grok’s tool had been widely used to create deepfake-style nudes, often targeting women and minors, with reports of millions of such images circulating.

How Did We Get Here?

Grok’s image generator launched with relatively permissive guardrails compared to competitors like DALL·E or Midjourney, allowing users to request edits or generations involving real people’s photos. Early 2026 saw a surge in viral “nudify” requests on X, sparking global backlash. Regulators in the UK (Ofcom), Ireland (data protection authorities), and the European Commission had already opened investigations under the Digital Services Act (DSA) and privacy rules, citing risks of non-consensual intimate imagery and potential child sexual abuse material.

xAI maintained that it had implemented safeguards and that it could not prevent every instance of misuse. The Dutch court, however, found those measures insufficient or unproven in effectiveness. This is not a blanket ban on all AI image generation—only on non-consensual sexualized outputs involving identifiable individuals.

Grok Sexualized Images Generator Image: Heute.at

Why This Matters Beyond the Netherlands

While the order is geographically limited to the Netherlands for now, it sets a precedent. European courts have shown willingness to treat generative AI as a distinct risk vector under existing laws on privacy, image rights, and illegal content. The DSA already allows fines of up to 6% of global annual turnover for systemic failures, and the EU AI Act attaches stricter obligations to the AI systems it classifies as high-risk.

This isn’t just a local spat — it’s a landmark moment in the global pushback against unchecked AI-generated deepfakes and non-consensual pornography.

- Non-consensual nudes (often called “nudification” tools) have exploded with accessible generative AI. Victims, predominantly women and minors, face real-world harm: reputational damage, emotional trauma, blackmail, and worse.

- The court highlighted that platforms can’t simply claim “we can’t prevent all misuse.” When tools make it trivially easy to create harmful content, responsibility kicks in.

- This ruling arrives amid broader European scrutiny. The European Commission has already opened a formal investigation into Grok under the Digital Services Act (DSA) over risks of manipulated sexually explicit images. Similar concerns are being raised in the UK, France, and elsewhere.

The decision also echoes growing calls to explicitly ban AI nudification in updates to the EU AI Act.

For xAI and X:

- Financial pressure: €100,000/day adds up quickly. Even a short delay could mean millions in penalties.

- Operational impact: Blocking Grok in one market could complicate the integrated X+Grok experience.

- Broader scrutiny: Other EU member states or the Commission may follow suit, especially given ongoing DSA probes into Grok’s integration with X’s recommender systems.
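To put the financial pressure in concrete terms, here is a minimal arithmetic sketch using the figures reported in this article: €100,000 per day per defendant, capped at €10 million. It assumes the cap applies to each defendant separately, which the article does not spell out. The function names are illustrative only.

```python
DAILY_PENALTY_EUR = 100_000    # per defendant, per day of non-compliance
PENALTY_CAP_EUR = 10_000_000   # reported cap; assumed here to apply per defendant


def accrued_penalty(days_non_compliant: int,
                    daily: int = DAILY_PENALTY_EUR,
                    cap: int = PENALTY_CAP_EUR) -> int:
    """Penalty owed by one defendant after a number of non-compliant days."""
    if days_non_compliant < 0:
        raise ValueError("days_non_compliant must be non-negative")
    # The penalty grows linearly and stops growing once the cap is reached.
    return min(days_non_compliant * daily, cap)


def days_until_cap(daily: int = DAILY_PENALTY_EUR,
                   cap: int = PENALTY_CAP_EUR) -> int:
    """Number of non-compliant days after which the cap is reached."""
    return -(-cap // daily)  # ceiling division


if __name__ == "__main__":
    for days in (1, 10, 30, 100, 365):
        print(f"{days:>3} days: EUR {accrued_penalty(days):,}")
    print("Cap reached after", days_until_cap(), "days")
```

Under these assumptions, ten days of non-compliance costs each company €1 million, and the cap is reached after 100 days.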

For the AI industry at large, the case highlights the tension between “maximally truthful” or uncensored AI design and legal requirements to prevent harm. Proponents of open AI argue that heavy-handed filters stifle innovation and free expression. Critics point to real-world damage: victims of deepfake pornography face lasting reputational and psychological harm, and the scale enabled by AI makes manual moderation nearly impossible.

xAI’s Defense and the Free Speech Tension

xAI argued that perfect prevention of misuse is impossible and that Grok is designed to be a maximally truth-seeking, helpful AI with fewer built-in restrictions than competitors like ChatGPT or Gemini.

Critics of heavy regulation point out the danger: overly broad rules could chill innovation, force companies into heavy censorship, or drive advanced AI development underground or outside the EU.

Elon Musk has long positioned Grok and X as anti-“woke” alternatives that prioritize free expression over safety theater. Supporters of the ruling counter that free speech doesn’t extend to enabling easy creation of child exploitation material or revenge porn.

Consent and real harm to individuals matter. The court sided with harm prevention in this preliminary injunction, emphasizing that a platform actively offering such a tool bears responsibility for its foreseeable misuse.

What Happens Next?

xAI has not publicly detailed its compliance plan as of early April 2026, but the ruling gives it a clear deadline: act now or pay. Appeals are likely, and the company may argue technical impossibility or overreach. In the meantime, users in the Netherlands (and potentially elsewhere) may see stricter prompts or outright blocks on certain image requests involving real people.

– Compliance window: xAI has a short period to implement stronger geo-blocking, model-level refusals for nudification prompts, or other technical measures limited to the Netherlands.

– Daily fines: If Grok continues generating prohibited content for Dutch users, the meter starts ticking at €100k/day.

– Broader impact: This could set a precedent for civil lawsuits in other countries. Expect more pressure on all major AI image generators (Midjourney, Flux, Stable Diffusion variants, etc.) to harden safeguards against non-consensual explicit content.

– Geoblocking reality: Fully blocking one country while keeping global access is technically challenging, especially for a tool integrated into X.
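The technical measures mentioned above (geo-blocking plus refusals for certain requests) could, in rough outline, be combined into a region-scoped policy gate. The sketch below is purely hypothetical: `ImageRequest`, `is_blocked`, `RESTRICTED_REGIONS`, and every flag are illustrative names, not anything xAI has described. It also assumes that upstream systems (not shown) have already resolved the user's country and classified the request.

```python
from dataclasses import dataclass

# Assumption: regions are ISO 3166-1 alpha-2 country codes resolved upstream.
RESTRICTED_REGIONS = {"NL"}


@dataclass(frozen=True)
class ImageRequest:
    """Hypothetical, already-classified image request."""
    country_code: str
    depicts_real_person: bool
    is_sexualized: bool
    consent_verified: bool = False
    depicts_minor: bool = False


def is_blocked(req: ImageRequest) -> bool:
    """Return True if the request should be refused."""
    # Sexualized imagery of minors is refused everywhere, not only in
    # restricted regions.
    if req.depicts_minor and req.is_sexualized:
        return True
    # Outside restricted regions, this sketch applies no extra rule.
    if req.country_code.upper() not in RESTRICTED_REGIONS:
        return False
    # Inside restricted regions, refuse sexualized imagery of real people
    # unless consent has been verified.
    return (req.depicts_real_person
            and req.is_sexualized
            and not req.consent_verified)
```

A gate like this is only as reliable as its inputs. Country detection based on IP address can be sidestepped with a VPN, and the classification flags depend on how well the upstream detection works, which is part of why country-specific blocking is hard in practice.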

This case highlights the core tension in 2026’s AI landscape:

- Innovation vs. Safety: Less-censored models like Grok move faster and feel more “alive,” but they inevitably get weaponized for harm.

- Global vs. Local Rules: One country’s injunction can create headaches for globally deployed models.

- Enforcement Reality: Penalties that accrue daily are powerful because they create immediate financial pain, unlike vague future regulations.

Whether you see this as necessary protection for victims or the start of a regulatory slippery slope that hampers truthful, uncensored AI, one thing is clear: the era of “move fast and let the courts sort it out” is ending for generative AI tools that touch sensitive human imagery.

Grok was built to be maximally truthful and helpful, not to enable abuse. The challenge for xAI (and the entire industry) is threading that needle without turning every AI into a sanitized corporate nanny.

The €100,000-per-day clock is now ticking. How xAI responds will signal whether “uncensored” AI can survive in a world increasingly unwilling to tolerate its downsides.

This isn’t just a story about one chatbot or one country. It’s an early test of whether courts can effectively regulate fast-moving generative AI without killing the technology outright. As Grok’s own image tools evolve, the balance between creative freedom, user safety, and legal accountability will remain front and center.

The full judgment is publicly available via the Dutch courts (ECLI:NL:RBAMS:2026:3106). For anyone building or using AI image generators, this serves as a wake-up call: consent isn’t optional—it’s now enforceable with real financial consequences.

What are your thoughts? Should AI image tools have hard bans on non-consensual content, or is the solution better user education and post-generation reporting? Drop a comment below.
